AI anxiety

The monsters in my nightmares have morphed from the supernatural kind, inspired by the wild imagination of childhood, to a more sinister type of evil: artificial intelligence.

The manifestation of this fear in my dreams is clear evidence of the deep root AI has already taken in my subconscious, and I’m dreading the day it takes over entirely. Call me dramatic, but I did recently watch Spike Jonze's movie ‘Her’, in which the protagonist falls in love with an operating system. This premise doesn’t seem at all far-fetched considering the accuracy with which AI is able to emulate the human mind. One of my friends uses ChatGPT for her math homework so often, we joke it's her boyfriend. “She's probably texting her boyfriend” translates to “She's definitely texting her AI chatbot”. It's easy to forget your Snapchat AI is just a screen and fall prey to the false possibility of more, especially in today’s world where human connection is rare.

What scares me most is what the excessive use of AI means for the future. As people fall into the trap of using AI instead of their own brains, we will inevitably move away from meritocracy into a debilitating culture of dependency. Personally, losing the ability to think does not sound appealing. Maybe this is existential, maybe I’m being nihilistic. This isn't to say AI isn't helpful for minor clerical or technical tasks. I’m guilty of using it on occasion, too. My issue is when it comes to creation.

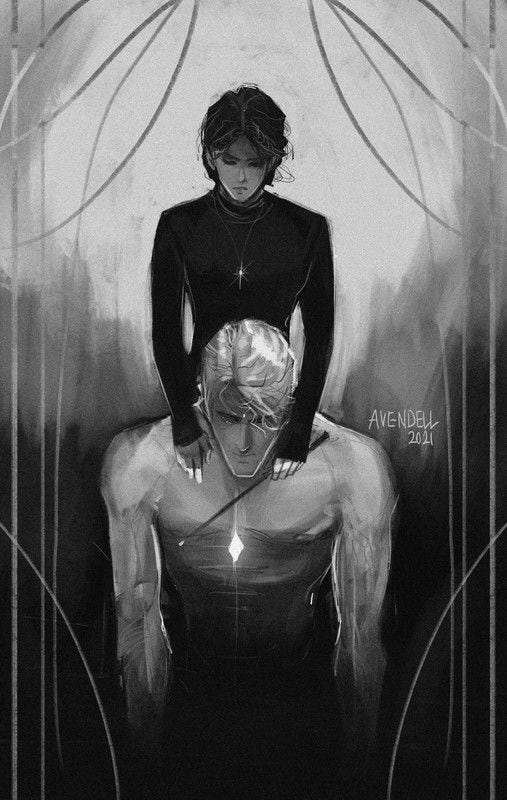

The best example I can give is of fan art. I was (and still am) a Wattpad child through and through. I live for when a good Dramione or Marauders edit comes up on my TL. It feels like I'm part of a niche group united via shared obsession. So what happens when the content becomes AI-generated? The human connection that made me feel like I was part of something disappears. What's worse is that sometimes I can't tell the difference between AI-generated and non-AI-generated art.

As I fell into a research tunnel fueled by my AI existentialism, I came across several findings linking AI anxiety to fear acquisition theory, and they reflected my own experiences. To summarise, AI anxiety can arise through four pathways: conditioning to AI invasion of privacy, an innate fear of AI's opacity, information/instruction from AI science fiction, and vicarious exposure to AI replacing human work.

Conditioning to AI invasion of privacy? Check. AI opacity? Check. AI sci-fi? Check.

Replacing human work? There it is. The bane of my existence. Check x1000. x300 million to be exact, because that's how many jobs AI could replace, according to Goldman Sachs.

It's like being thrown into the deep end of an empty pool. Journalism is my passion and it's apparently already a dying art. Do I even have a reason to pursue a degree in what I love if I’m just going to be broke and homeless post-grad? What even is the point of trying, then? This is the cognitive spiral I’m currently absorbed in.

Whenever I express these feelings to proponents of AI usage (aka my father), I'm met with the response that the development should be welcomed, the primary argument being that we use computers the same way, and no one really has a problem with those. But to me, there is a clear distinction. While a computer’s operation is mechanical and imperative, AI makes autonomous decisions, operating independently of humans.

There is a power imbalance here that's reminiscent of the kind I experienced with movies as a child: before going to the cinema, I’d have to google the entire plot of the movie simply because I hated the idea of an electronic screen having more information than me. So, as a control freak, here’s how I see it: by enabling AI, you disable yourself. The entire creation of AI was based on a model that is meant to mimic (steal) human creation. While you may provide AI with prompts, the real work is being done by the machine. What does that make you? Brainless, and consequently worthless.

Even with such extreme levels of AI anxiety, I am well aware that quitting the use of AI is basically an impossible task. It is developing too rapidly and is too deeply integrated. When you google something, the first thing that comes up is Gemini’s AI overview. Apple plans to embed AI into future iPhones. This superintelligence is literally inescapable. The best we can do is use it mindfully. As I understand it, this means being aware of the ethical and environmental consequences of AI use, especially for creative purposes, including art and writing. Don't ask ChatGPT what song has the lyrics “—”. Shazam is free. Don't use it for making your grocery lists, either.